Halo: ODST Content Officially on PS5 in Next Week’s Helldivers 2 Update

Halo armour, weapons, and more on PS5

- by Liam Croft

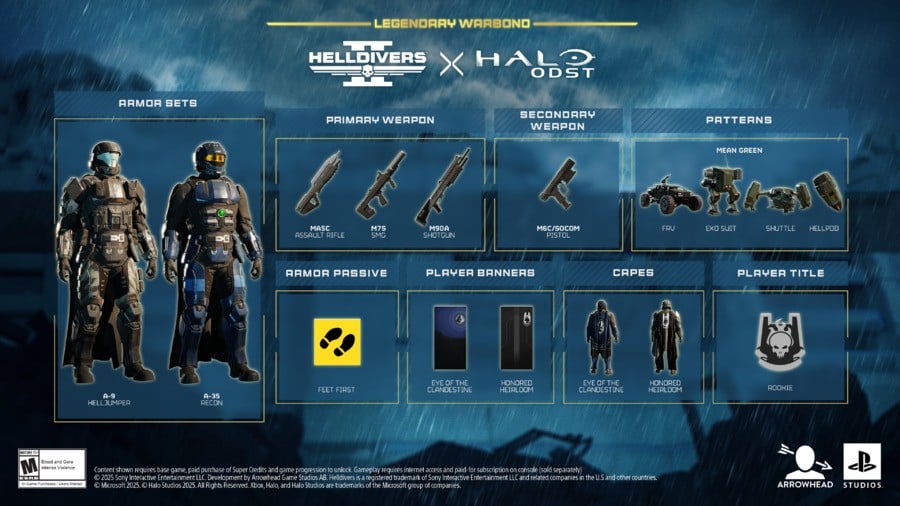

Following last week’s tease, it’s now been confirmed that content from Halo 3: ODST will arrive in Helldivers 2 on 26th August, the same day the game’s Xbox Series X|S port launches. The content takes the form of a Legendary Warbond, and you can see what’s in store in the trailer above.

The collaboration brings with it the following to Helldivers 2:

- MA5C Assault Rifle

- M6C / SOCOM Pistol

- M90A Shotgun

- M7S SMG

- A-9 Helljumper Armor Set

- A-35 Recon Armor Set

There’ll also be an assortment of new capes, player banners, passive abilities, patterns, and player titles. The Legendary Warbond will cost 1,500 Super Credits when it launches next week, as it’s described as “a step up from previous Warbond tiers, and packed with gear worthy of the legend”.

This is the first time Halo video game content has ever appeared on PS5, and it arrives on the very same day Gears of War: Reloaded makes its way to Sony’s current-gen system, 26th August.

Will you be dressing up as an ODST next week in Helldivers 2? Let us know in the comments below.

Liam grew up with a PlayStation controller in his hands and a love for Metal Gear Solid. Nowadays, he’s found playing the latest and greatest PS5 games as well as supporting Derby County. That last detail is his downfall.