Hobbyists discover how to insert custom fonts into AI-generated images

Letters, we get letters —

Like adding custom art styles or characters, in-world typefaces come to Flux.

An AI-generated example of the Cyberpunk 2077 LoRA, rendered with Flux dev.

Last week, a hobbyist experimenting with the new Flux AI image synthesis model discovered that it’s unexpectedly good at rendering custom-trained reproductions of typefaces. While far more efficient methods of displaying computer fonts have existed for decades, the new technique is useful for AI image hobbyists because Flux can render text accurately, and users can now directly insert words rendered in custom fonts into AI image generations.

We’ve had the technology to accurately produce smooth computer-rendered fonts in custom shapes since the 1980s (1970s in the research space), so creating an AI-replicated font isn’t big news by itself. But a new technique means you could see a particular font appear in AI-generated images, say, of a chalkboard menu at a photorealistic restaurant or a printed business card being held by a cyborg fox.

Shortly after the emergence of mainstream AI image synthesis models like Stable Diffusion in 2022, some people began wondering: How can I insert my own product, clothing item, character, or style into an AI-generated image? One answer that emerged came in the form of LoRA (low-rank adaptation), a technique introduced in 2021 that allows users to augment knowledge in an AI base model with modular add-ons that have been custom-trained.

These LoRAs, as the modules are called, allow image synthesis models to create new concepts not originally found (or poorly represented) in the foundation model’s training data. In practice, image synthesis hobbyists use them to render unique styles (say, everything in chalk art) or subjects (detailed images of Spider-Man, for instance). Each LoRA has to be specially trained using examples provided by the user.
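For readers curious about what "low-rank adaptation" means in practice, here is a minimal sketch of the general idea in plain Python. It is not how Flux or any particular training tool implements LoRA: the matrix sizes, values, and function names (`apply_lora`, `lora_param_count`) are made up for illustration. The point is that the add-on stores two small matrices whose product is added to a frozen base weight matrix, which is why a LoRA can be distributed separately from the much larger base model.

```python
# Minimal sketch of the low-rank adaptation (LoRA) idea, in plain Python.
# All sizes, values, and names are illustrative assumptions, not Flux's own.

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]

def apply_lora(base, lora_b, lora_a, scale=1.0):
    """Return base + scale * (lora_b @ lora_a) without modifying base."""
    delta = matmul(lora_b, lora_a)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(base, delta)]

def lora_param_count(d_out, d_in, rank):
    """Number of stored values in the two low-rank factors."""
    return rank * (d_out + d_in)

# A frozen 4x4 "base model" weight matrix (identity, for readability).
base = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]

# A rank-1 add-on: lora_b is 4x1 and lora_a is 1x4.
lora_b = [[1.0], [0.0], [0.0], [0.0]]
lora_a = [[0.0, 2.0, 0.0, 0.0]]

# The adapted weights are computed on the fly; the base stays untouched.
adapted = apply_lora(base, lora_b, lora_a, scale=0.5)

# The add-on stores 8 values instead of the 16 in the full matrix.
print(lora_param_count(4, 4, 1), "vs", 4 * 4)
```

The savings grow with matrix size: for a hypothetical 3,072-by-3,072 layer at rank 16, the two factors hold 98,304 values versus roughly 9.4 million in the full matrix.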

Until Flux, most AI image generators weren’t very good at rendering accurate text within a scene. If you prompted Stable Diffusion 1.5 to render a sign that said “cheese,” it would return gibberish. OpenAI’s DALL-E 3, released last year, was the first mainstream model to do text fairly well. Flux still makes mistakes with words and letters at times, but it’s the most capable AI model we’ve seen so far at rendering what you might call “in-world text.”

Since Flux is an open model available for download and fine-tuning, this past month has been the first time training a typeface LoRA might make sense. That’s exactly what an AI enthusiast named Vadim Fedenko (who did not respond to a request for an interview by press time) discovered recently. “I’m really impressed by how this turned out,” Fedenko wrote in a Reddit post. “Flux picks up how letters look in a particular style/font, making it possible to train Loras with specific Fonts, Typefaces, etc. Going to train more of those soon.”

An example of the first Flux typeface LoRA, Y2K.

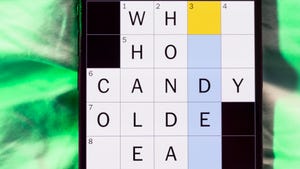

For his first experiment, Fedenko chose a bubbly “Y2K” style font reminiscent of those popular in the late 1990s and early 2000s, publishing the resulting model on the Civitai platform on August 20. Two days later, a Civitai user named “AggravatingScree7189” posted a second typeface LoRA that reproduces a font similar to one found in the Cyberpunk 2077 video game.

“Text was so bad before it never occurred to me that you could do this,” wrote a Reddit user named eggs-benedryl when reacting to Fedenko’s post on the Y2K font. Another Redditor wrote, “I didn’t know the Y2K journal was fake until I zoomed it.”

Is it overkill?

It’s true that using a deeply trained image synthesis neural network to render a plain old font on a simple background is probably overkill. You wouldn’t want to use this method to replace Adobe Illustrator while designing a document.

“This looks good but it’s kinda funny how we’re reinventing the idea of fonts as 300MB LoRAs,” wrote one Reddit commenter on a thread about the Cyberpunk 2077 font.

Generative AI is often criticized for its environmental impact, and it’s a valid concern for massive cloud data centers. But we found that Flux can insert these fonts into AI-generated scenes while running locally on an RTX 3060 in a quantized (size-reduced) form, and the full dev model can run on an RTX 3090. That uses about as much electricity as playing a video game on the same PC. The same goes for LoRA creation: The creator of the Cyberpunk 2077 font trained the LoRA in three hours on a 3090 GPU.

There are also ethical issues with using AI image generators, such as how they are trained on harvested data without content owner consent. Even though the technology is divisive among some artists, a large community of people use it every day and share the results online through social media platforms like Reddit, which leads to new applications of the technology like this one.

As of this writing, there are only two custom Flux typeface LoRAs, but we’ve already heard of people planning to create more. While it’s still in its earliest stages, the technique of creating typeface LoRAs may become foundational if AI image synthesis becomes more widely deployed in the future. Adobe, with its own image synthesis models, is likely watching.